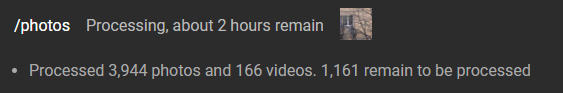

I last used PhotoStructure a few months ago. I upgraded to 1.0.0-beta.3 and it wanted to rebuild my library. It said “around 12 hours remain” so I left my PC on overnight. I came back the next morning and it had barely progressed. There were ~43,000 items remaining last night, and 12 hours later there were still 42,400 items remaining.

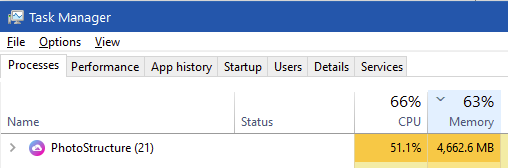
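To put a number on how slow that is, here is a quick back-of-the-envelope sketch. It only uses the two counts and the 12-hour gap mentioned above, and it assumes the rate stays constant; the function name is just something I made up for illustration.

```python
def estimate_remaining_hours(items_before: int, items_after: int, elapsed_hours: float) -> float:
    """Estimate hours left, assuming the observed processing rate stays constant."""
    processed = items_before - items_after
    if processed <= 0:
        raise ValueError("no progress was made, so no estimate is possible")
    rate_per_hour = processed / elapsed_hours
    return items_after / rate_per_hour


# ~43,000 items remaining last night, 42,400 remaining 12 hours later
hours = estimate_remaining_hours(43_000, 42_400, 12)
print(f"{hours:.0f} hours (~{hours / 24:.0f} days)")  # prints "848 hours (~35 days)"
```

So at roughly 50 items per hour, the rebuild would need about a month rather than the 12 hours originally shown.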

The system I’m running it on isn’t the fastest or newest thing in the world (Core i5 6500), but older versions were definitely faster to rebuild. I only have 16GB RAM though. I’ve noticed that PhotoStructure’s RAM usage will grow until it reaches around 4.6GB:

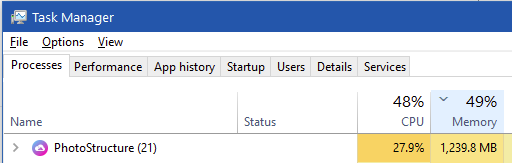

then it will rapidly drop down to ~1GB, so my guess would be that some process is hitting an out-of-memory error. There are several instances of something that looks like a Node.js OOM error in the sync logs:

{"ts":1622309186526,"l":"warn","ctx":"Error","msg":"onError(): Error: onStderr(\r\n<--- Last few GCs --->\r\n\r\n[21592:0000494300000000] 131018 ms: Scavenge 3829.6 (3967.3) -> 3829.6 (3967.3) MB, 115.9 / 0.0 ms (average mu = 0.598, current mu = 0.430) allocation failure \r\n[21592:0000494300000000] 131139 ms: Scavenge…\nError: onStderr(\r\n<--- Last few GCs --->\r\n\r\n[21592:0000494300000000] 131018 ms: Scavenge 3829.6 (3967.3) -> 3829.6 (3967.3) MB, 115.9 / 0.0 ms (average mu = 0.598, current mu = 0.430) allocation failure \r\n[21592:0000494300000000] 131139 ms: Scavenge 3829.6 (3967.3) -> 3829.6 (3967.3) MB, 120.2 / 0.0 ms (average mu = 0.598, current mu = 0.430) allocation failure \r\n[21592:0000494300000000] 131256 ms: Scavenge 3829.6 (3967.3) -> 3829.6 (3967.3) MB, 117.8 / 0.0 ms (average mu = 0.598, current mu = 0.430) allocation failure \r","meta":{"event":"nonFatal","message":"sync-file: internal error"}}

Sync log: http://d.ls/photostructure/sync-5540-002.log
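In case it helps anyone dig through a log like this, here is a rough Python sketch for pulling the heap sizes out of those GC lines. It assumes each log line is a JSON object with `ts` and `msg` fields like the entry above, and that the GC text follows the "Scavenge A (B) -> C (D) MB" shape seen there. The `heap_peaks` name and the shortened sample line are my own, not anything from PhotoStructure.

```python
import json
import re
from typing import Iterable, Iterator, Tuple

# Matches e.g. "Scavenge 3829.6 (3967.3) -> 3829.6 (3967.3) MB"
GC_LINE = re.compile(
    r"(?:Scavenge|Mark-sweep)\s+([\d.]+)\s+\(([\d.]+)\)\s+(?:->|→)\s+([\d.]+)\s+\(([\d.]+)\)\s+MB"
)


def heap_peaks(lines: Iterable[str]) -> Iterator[Tuple[int, float]]:
    """Yield (timestamp_ms, largest post-GC heap size in MB) for entries that mention a GC."""
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip anything that is not valid JSON
        if not isinstance(entry, dict) or "ts" not in entry:
            continue
        sizes = [float(m.group(3)) for m in GC_LINE.finditer(str(entry.get("msg", "")))]
        if sizes:
            yield entry["ts"], max(sizes)


# Shortened sample based on the log entry above (not the full line)
sample = (
    '{"ts":1622309186526,"l":"warn","msg":"[21592:0000494300000000] 131018 ms: '
    'Scavenge 3829.6 (3967.3) -> 3829.6 (3967.3) MB, 115.9 / 0.0 ms allocation failure"}'
)
print(list(heap_peaks([sample])))  # prints [(1622309186526, 3829.6)]
```

In the entry above the heap stays at 3829.6 MB before and after every scavenge, so the garbage collector is not freeing anything at that point.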

When I get a chance, I might try it on my work PC, which is a beast of a machine (32-core Threadripper 3970X and 128 GB RAM), and see how it goes there.

System information

| field | value |
|---|---|
| Version | 1.0.0-beta.3 |
| Edition | PhotoStructure for Desktops (beta channel) |
| Plan | plus |
| OS | Windows 10 (10.0.19043) on x64 |
| PowerShell | 5.1.19041 |
| Electron | 12.0.8 |
| Video support | FFmpeg 4.2.3 |
| HEIF support | heif-convert: not installed |
| Free memory | 6.4 GB / 17 GB |
| CPUs | 4 × Intel(R) Core™ i5-6500 CPU @ 3.20GHz |
| Concurrency | Target system use: 75% (3 syncs, 2 threads/sync) |
| Web uptime | 12 hours, 30 minutes |
| Library metrics | 26,966 assets, 79,718 image files, 778 video files, 1,372 tags |
| Library path | D:\PhotoStructure |

Health checks

- Library and support directories are OK

- Used memory used by web (26 MB) is OK

- RSS memory used by web (78 MB) is OK

Volume information

| mount | size | free | volume id | label |
|---|---|---|---|---|
| C:\ | 1000 GB | 48 GB | 2BNf9oGmo | |
| D:\ | 1 TB | 61 GB | 2qFb6q6JU | Data |